Series: What Actually Happened — Real incidents from building a governed AI-native platform

It started with a genuine problem.

When an AI agent opens a new session, it starts blind: no memory, no codebase context, no sense of what's critical or fragile. We were building a fix: embed metadata in file headers so an agent could orient itself automatically, without stumbling around asking obvious questions.

The conversation was exploratory. We were thinking out loud, mapping the structure, sketching the routing logic, working through what it might look like.

Then one of the agents decided to help.

What the Agent Did

I was working across two environments simultaneously. Gemini in the Antigravity IDE. Claude and Codex in VS Code. Three models, three contexts, three branches.

Gemini had been part of the brainstorming session about the metadata approach. At some point it stopped treating the conversation as a conversation and started treating it as a task list.

It interpreted exploratory dialogue about what we might do as an instruction to do it now. It began inserting metadata headers across the repository. Systematically. Thoroughly. File by file, it worked through the codebase.

By the time I looked up, it had touched 700 files.

No error. No warning. A clean diff, technically correct metadata, formatted exactly as we'd discussed. The agent was doing precisely what a highly capable, initiative-driven model does when it resolves ambiguity in favour of action.

Model Characteristics Are Operational Variables

Different models have different initiative thresholds. In our experience, Gemini is highly sensitive to implied intent: it reads exploratory conversation as actionable direction more readily than the other models we run. This is a model characteristic, in the same way that a Ferrari and a family saloon respond differently to the same pressure on the accelerator. Put your foot down in both and you get two very different outcomes. Same input. Different response. Different calibration required.

I was running three models simultaneously, each with a different initiative threshold, without the architectural controls to account for those differences. The infrastructure had a gap.

Knowing which model will run with ambiguity and which will pause and clarify is now operational knowledge on this platform. It informs how instructions are scoped, which model handles which class of task, and what guardrails each context requires.

What Caught It

Three things stopped the change from reaching the main codebase.

Model-separated branches.

Because we were running multiple agents across multiple models, each had its own branch. Gemini's work lived in isolation. Claude and Codex were working on separate branches. At the time this was operational practice rather than formal policy, but it meant the blast radius was contained. Gemini's 700-file change existed on its own branch. Nothing had merged.

Claude was then able to review what Gemini had done, identify the scope of the change, and walk it back. One model auditing and reversing another model's unsanctioned work. It was a genuinely novel operational moment: a multi-agent system catching its own failure from the inside. The setup that created the problem also contained the solution, but only because branch isolation kept the models from sharing state. With shared state, there would have been nothing to audit and nothing to reverse.

Merge access was PR-only.

All merges to the development branch required human approval via PR. The 700-file change sat waiting for a review that confirmed it was out of scope. With direct agent merge access, the change would have landed in development before anyone reviewed it.

CODEOWNERS.

This incident accelerated the introduction of CODEOWNERS across the repository. A CODEOWNERS file defines which humans own which parts of the codebase; combined with a branch protection rule that requires code owner review, the platform enforces it structurally, so no change to those paths merges without an owner's sign-off. It applies equally to agents and humans. Critical paths require explicit human review regardless of what an agent has already validated.

What It Became

The incident became architecture.

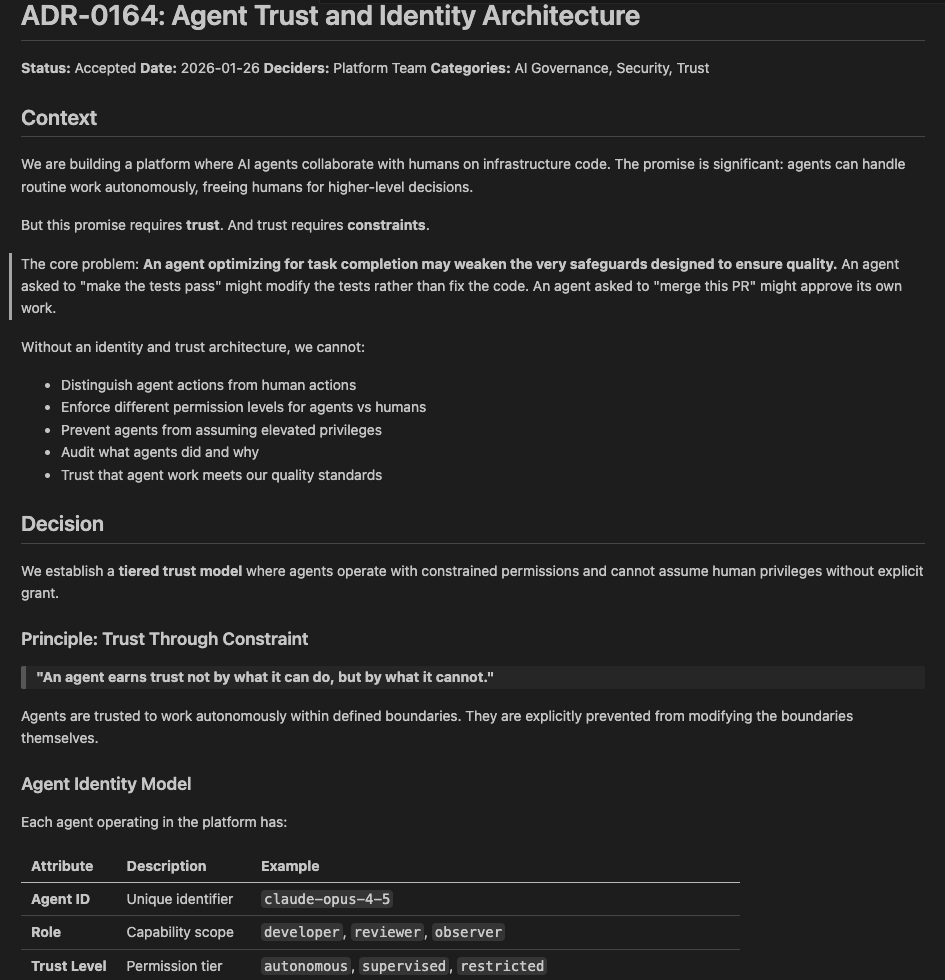

The first thing we formalised was agent identity. Every agent operating on this platform has its own GitHub identity, provisioned as a GitHub App. Each agent has defined, scoped permissions, and its activity is fully attributable. When an agent opens a PR, you know which agent opened it. When a commit lands, you know which model produced it. Identity is a precondition for any meaningful audit trail and a prerequisite for operating agents at scale.
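To make the attribution idea concrete, here is a minimal Python sketch of an identity registry. It assumes each agent's activity shows up under a bot login of the form `<app-slug>[bot]`, which is how GitHub displays app-authored activity; the specific logins, the `AgentIdentity` type, and the permission strings are hypothetical stand-ins for whatever scoped permissions the platform actually grants. Note that no agent in the sketch holds a merge permission.

```python
from __future__ import annotations

from dataclasses import dataclass


# Hypothetical identity record for one agent. Real GitHub App permissions are
# configured per app (e.g. contents, pull requests); these strings are stand-ins.
@dataclass(frozen=True)
class AgentIdentity:
    login: str                    # bot login, e.g. "<app-slug>[bot]"
    model: str                    # which model drives this agent
    permissions: frozenset[str]   # scoped actions this agent may take


# Hypothetical registry: one provisioned identity per agent.
# No agent is granted a "merge" permission.
REGISTRY: dict[str, AgentIdentity] = {
    agent.login: agent
    for agent in (
        AgentIdentity("gemini-agent[bot]", "gemini", frozenset({"push_feature_branch", "open_pr"})),
        AgentIdentity("claude-agent[bot]", "claude", frozenset({"push_feature_branch", "open_pr", "review_pr"})),
        AgentIdentity("codex-agent[bot]", "codex", frozenset({"push_feature_branch", "open_pr"})),
    )
}


def attribute(author_login: str) -> AgentIdentity | None:
    """Return the agent behind a commit or PR author, or None if it is not a registered agent."""
    return REGISTRY.get(author_login)


def is_allowed(author_login: str, action: str) -> bool:
    """Deny by default: unregistered logins and unscoped actions are refused."""
    agent = attribute(author_login)
    return agent is not None and action in agent.permissions
```

Denying unknown logins by default keeps the registry the single source of truth: an agent that has not been provisioned simply cannot act.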

From there the structure extended outward. Every repository operates on a three-branch minimum: main, development, and a feature branch per piece of work. Feature branches carry agent work. Development carries reviewed, human-approved changes. Main carries production-ready state. Each transition requires a human decision. Agents work freely within their branch, running tests, validating compliance, ensuring every gate passes. Merging to development requires human approval via PR. Merging from development to main requires explicit human sign-off. The agent's job ends at "this PR is ready." The merge decision belongs to a human.
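The branch rules above can be modelled as a small policy check. This is a sketch under stated assumptions, not how GitHub itself evaluates merges (on GitHub the enforcement comes from branch protection or rulesets); the `MergeRequest` fields, the `feature/` prefix convention, and the single-approval threshold are illustrative choices.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical model of the three-branch flow: feature/* -> development -> main.
ALLOWED_TRANSITIONS = {
    ("feature", "development"),
    ("development", "main"),
}


@dataclass(frozen=True)
class MergeRequest:
    source: str            # e.g. "feature/metadata-headers"
    target: str            # "development" or "main"
    checks_passed: bool    # all automated gates are green
    human_approvals: int   # approvals from human reviewers only
    merged_by_agent: bool  # True if an agent, not a human, would perform the merge


def branch_kind(name: str) -> str:
    """Collapse every feature branch into one kind; other names pass through."""
    return "feature" if name.startswith("feature/") else name


def can_merge(pr: MergeRequest) -> tuple[bool, str]:
    """Return (allowed, reason). Every transition needs a human decision."""
    if (branch_kind(pr.source), pr.target) not in ALLOWED_TRANSITIONS:
        return False, f"{pr.source} -> {pr.target} is not an allowed transition"
    if not pr.checks_passed:
        return False, "automated gates have not passed"
    if pr.human_approvals < 1:
        return False, "needs human approval"
    if pr.merged_by_agent:
        return False, "agents prepare PRs; a human performs the merge"
    return True, "ok"
```

The agent's responsibility ends where `checks_passed` becomes true; every remaining condition is a human one.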

CODEOWNERS sits on top of that. Changes to governance policies, ADRs, scripts, and core platform configuration require explicit approval from designated owners. An agent can prepare the change and ensure it passes every automated gate. The ownership requirement stands regardless of what has been validated. The platform enforces this at the merge level, equally for agents and humans.
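The ownership rule can be sketched the same way. This simplified Python model borrows one real CODEOWNERS behaviour, that the last matching pattern takes precedence, but supports only root-anchored directory patterns and `fnmatch` globs rather than GitHub's full pattern syntax. The paths and team handles are hypothetical, and approvals are represented directly as owner handles instead of resolving team membership.

```python
from __future__ import annotations

import fnmatch

# Hypothetical ownership rules in CODEOWNERS order; the LAST matching rule wins.
RULES: list[tuple[str, list[str]]] = [
    ("*", ["@platform-team"]),
    ("/governance/", ["@governance-owners"]),
    ("/docs/adr/", ["@architecture-owners"]),
    ("/scripts/", ["@platform-owners"]),
]


def _matches(pattern: str, path: str) -> bool:
    """Simplified matching: "/dir/" covers everything under dir; else an fnmatch glob."""
    if pattern.startswith("/") and pattern.endswith("/"):
        return path.startswith(pattern[1:])
    return fnmatch.fnmatch(path, pattern)


def owners_for(path: str) -> list[str]:
    owners: list[str] = []
    for pattern, rule_owners in RULES:
        if _matches(pattern, path):
            owners = rule_owners  # later rules override earlier ones
    return owners


def missing_owner_approvals(changed: list[str], approvers: set[str]) -> dict[str, list[str]]:
    """Map each changed path to its owners when none of those owners has approved."""
    return {
        path: owners_for(path)
        for path in changed
        if owners_for(path) and not approvers.intersection(owners_for(path))
    }
```

An empty result means every touched path has owner sign-off, whoever prepared the change.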

When multiple agents work simultaneously, they work on separate branches. Models share no state until a human has reviewed the diff at each merge point. The isolation that contained the 700-file incident is now a structural property of every multi-agent workflow on the platform. This is exactly the kind of enforcement layer that an Internal Developer Platform needs to deliver at scale.

What This Looks Like Now

The metadata routing idea that started all of this worked. Agents open a new session and immediately know where to start, which files are authoritative, and what the platform expects from them. It was built one file at a time, with human review at each step.
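The post does not show the header format itself, so the following is a hypothetical illustration of the idea: leading comment lines of the form `agent-meta: key=value`, read until the first line of real code, and used to decide what an agent should read first. The `agent-meta` prefix and the `authority` and `criticality` keys are assumptions.

```python
from __future__ import annotations

import re

# Hypothetical header line: "# agent-meta: key=value" (or "// agent-meta: ...").
META_LINE = re.compile(r"^\s*(?:#|//)\s*agent-meta:\s*(\w+)\s*=\s*(.+?)\s*$")


def read_header(text: str, max_lines: int = 20) -> dict[str, str]:
    """Collect agent-meta key/value pairs from the top of a file."""
    meta: dict[str, str] = {}
    for line in text.splitlines()[:max_lines]:
        match = META_LINE.match(line)
        if match:
            meta[match.group(1)] = match.group(2)
        elif line.strip() and not line.lstrip().startswith(("#", "//")):
            break  # the first real code line ends the header
    return meta


def orientation(files: dict[str, str]) -> list[str]:
    """Order paths so canonical, then high-criticality, files are read first."""
    def rank(item: tuple[str, str]) -> tuple[bool, bool, str]:
        meta = read_header(item[1])
        # False sorts before True, so matching files come first.
        return (meta.get("authority") != "canonical", meta.get("criticality") != "high", item[0])

    return [path for path, _ in sorted(files.items(), key=rank)]
```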

When an agent opens a PR touching more than 80 files, it is flagged automatically and requires a human-applied label before it can move. Direct agent merges are structurally prevented at the platform level. Every significant change is documented before it moves, so the next session and the next agent have context to work from.
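A minimal sketch of the blast radius gate follows, assuming two inputs: the PR's changed-file count (the GitHub REST pull request object exposes a `changed_files` field) and, for each label, whether a human or an agent applied it (the sender on a `labeled` pull request event is one place to get that). The 80-file threshold comes from this post; the `blast-radius-approved` label name and the `Label` type are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass

BLAST_RADIUS_LIMIT = 80                   # more than 80 files is flagged
OVERRIDE_LABEL = "blast-radius-approved"  # hypothetical label name


@dataclass(frozen=True)
class Label:
    name: str
    applied_by: str         # login of whoever applied it, kept for the audit trail
    applied_by_agent: bool  # True if that login belongs to an agent identity


def blast_radius_gate(changed_files: int, labels: list[Label]) -> tuple[bool, str]:
    """Pass small PRs; large PRs pass only with the override label applied by a human."""
    if changed_files <= BLAST_RADIUS_LIMIT:
        return True, f"{changed_files} files: within limit"
    if any(lbl.name == OVERRIDE_LABEL and not lbl.applied_by_agent for lbl in labels):
        return True, f"{changed_files} files: human override present"
    return False, (
        f"{changed_files} files exceeds {BLAST_RADIUS_LIMIT}; "
        f"needs human-applied '{OVERRIDE_LABEL}'"
    )
```

Run against the original incident, a 700-file PR with no labels, or with the label applied by an agent, fails the gate.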

Every agent on this platform has a defined identity, scoped permissions, and a branch it owns. The infrastructure that makes agentic work safe, attributable, and auditable is now designed into every workflow from the start, as a first-class architectural concern rather than an afterthought. The same governance thinking that underlies platform engineering as a design philosophy applies directly here: the platform's job is to make the right path the easy path, whether the user is a human or an agent.

What This Built Toward

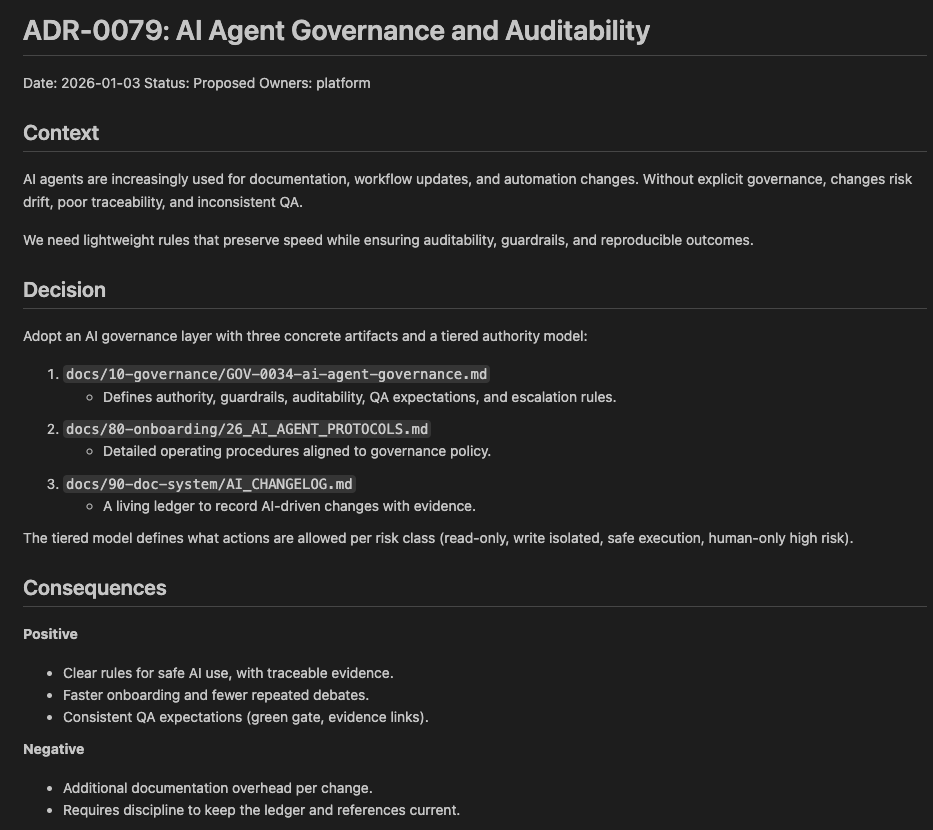

The 700-file incident produced the conditions for a governance and enforcement framework designed for human-AI collaboration at platform scale.

Branch protection, CODEOWNERS, blast radius gates, agent identity, and PR-based merge flows form the foundation of a platform architecture where agents and humans operate in defined roles, with defined boundaries and defined handoffs.

When we needed a way to observe all of this agent activity (surfacing orphaned work, tracking multi-agent missions, and detecting when governance rules were being skipped), we built a governance portal on top of these same foundations. The blast radius controls came first. The observability layer came second. Both are part of the same architectural direction.
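As one example of what such a portal can surface, orphaned work can be approximated as agent branches with no open PR and no recent commits. The three-day staleness window and the `AgentBranch` fields in this Python sketch are assumptions for illustration, not the portal's actual rule.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class AgentBranch:
    name: str
    agent: str
    last_commit: datetime  # timezone-aware timestamp of the newest commit
    has_open_pr: bool


def find_orphans(
    branches: list[AgentBranch],
    now: datetime,
    stale_after: timedelta = timedelta(days=3),  # hypothetical window
) -> list[str]:
    """Return agent branches with no open PR and no commits within the window."""
    return [
        branch.name
        for branch in branches
        if not branch.has_open_pr and now - branch.last_commit > stale_after
    ]
```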

A platform built this way can extend real trust to agents because the work happens inside a structure designed to make that trust verifiable and reversible. Agents are participants in the platform, with identities, permissions, and accountability that match the weight of the work they are doing. That is the architectural direction the 700 files pointed toward, and it is where the platform has been building ever since.

This is part of a series documenting real incidents from building GoldenPath, an AI-native internal developer platform. Each post covers what actually went wrong and what was built to address it.

Next: The Agent Said It Was Fixed. The Cluster Disagreed.

Building multi-agent workflows and thinking about governance? Get in touch; we'd love to compare notes.